Installing and Configuring Solr Cloud

To provide high availability and increase uptime for one of ARDC's primary functions, SOLR Cloud was considered as an option. SOLR powers the RDA Registry and the Portal software, providing search, quick lookup of indexed records and general Portal functionality.

Background and basic setup instructions for SOLR Cloud can be found at https://cwiki.apache.org/confluence/display/solr/SolrCloud

This tutorial assumes the minimum SOLR Cloud setup: 3 servers running 3 ZooKeeper instances and 3 SOLR instances, hosting 2 collections with 3 replicas each (one replica per server). Three ZooKeeper instances is the smallest ensemble that keeps a majority (quorum) when one server is lost.

For simplicity, we will refer to these servers as server1, server2 and server3.


Open Ports on the 3 servers

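ZooKeeper uses three ports by default: 2181 for client connections (including those from SOLR), 2888 for followers to connect to the leader, and 3888 for leader election. Open all three on each server, and open port 8983 so that the SOLR instances can communicate with each other.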
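Once the services are running, a small probe script can confirm that the ports are reachable from one server to the others. This is a minimal sketch: check-ports.sh and its functions are hypothetical (not part of ZooKeeper or SOLR), and it assumes bash and the coreutils timeout command are installed, since it relies on bash's /dev/tcp pseudo-device. A port only reports as open when something is listening on it, and port 2888 is only opened by the current ZooKeeper leader, so it is expected to show as closed on the two followers.

```shell
#!/usr/bin/env bash
# check-ports.sh (hypothetical helper): report whether the SolrCloud ports
# answer on each host given on the command line.
# Usage: ./check-ports.sh server1 server2 server3

PORTS="2181 2888 3888 8983"

# probe HOST PORT: succeeds if a TCP connection opens within 2 seconds.
# Uses bash's /dev/tcp pseudo-device, so bash must be installed.
probe() {
  timeout 2 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# check_host HOST: print one status line per port in $PORTS.
check_host() {
  for port in $PORTS; do
    if probe "$1" "$port"; then
      echo "$1:$port open"
    else
      echo "$1:$port closed or unreachable"
    fi
  done
}

for host in "$@"; do
  check_host "$host"
done
```

Run it from each server against the other two; a port that stays closed while the service is up usually points to a firewall rule.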

Installing ZooKeeper on the 3 servers

cd /opt

curl -O http://apache.mirror.digitalpacific.com.au/zookeeper/zookeeper-3.4.6/zookeeper-3.4.6.tar.gz

tar zxvf zookeeper-3.4.6.tar.gz

rm -rf zookeeper-3.4.6.tar.gz

cd /opt/zookeeper-3.4.6

mkdir data

cp conf/zoo_sample.cfg conf/zoo.cfg

Give each server an id; for example, server1 has an id of 1.

touch data/myid

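echo 1 > data/myid # use 2 on server2 and 3 on server3

Edit conf/zoo.cfg: change the dataDir line to point at the data directory created above (zoo_sample.cfg sets dataDir=/tmp/zookeeper, and ZooKeeper reads myid from dataDir), then add a reference to each of the 3 servers:

dataDir=/opt/zookeeper-3.4.6/data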

server.1=server1:2888:3888

server.2=server2:2888:3888

server.3=server3:2888:3888

Start ZooKeeper on each of the 3 servers:

bin/zkServer.sh start

Install SOLR in cloud mode

cd /opt

wget http://archive.apache.org/dist/lucene/solr/5.4.0/solr-5.4.0.tgz

tar -zxvf solr-5.4.0.tgz

Start SOLR in cloud mode on each of the 3 servers

/opt/solr-5.4.0/bin/solr start -c -s /opt/solr-5.4.0/server/solr -m 1g -z server1:2181,server2:2181,server3:2181

Here -c starts SOLR in cloud mode, -s sets the SOLR home directory, -m sets the heap size to 1 GB and -z gives the ZooKeeper connection string. SOLR should now be running at http://localhost:8983/solr. The Cloud tab of the SOLR admin interface will show nothing yet, because no collections have been created. To create the portal collection:

# do this on server1

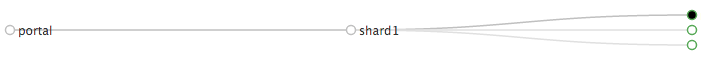

/opt/solr-5.4.0/bin/solr create -c portal

This creates the portal collection with the default data-driven schema (data_driven_schema_configs), a single shard (shard1) and one replica on server1.

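To add a replica on server2 and another on server3, call the Collections API ADDREPLICA action. Quote the URL so that the shell does not treat & as a command separator: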

curl -XGET "http://server1:8983/solr/admin/collections?action=ADDREPLICA&collection=portal&shard=shard1&node=server2:8983_solr"

curl -XGET "http://server1:8983/solr/admin/collections?action=ADDREPLICA&collection=portal&shard=shard1&node=server3:8983_solr"

In the Cloud tab, you should see something similar to the following:

The first dot represents server1, which is the active leader.
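The same ADDREPLICA calls are needed for the second collection, after creating it with bin/solr create -c COLLECTION (its name is not given here, so COLLECTION is a placeholder). A minimal sketch that wraps the calls, assuming the collection has a single shard named shard1 and that node names follow the host:8983_solr pattern used above; add-replicas.sh and addreplica_url are hypothetical. As an alternative, bin/solr create in cloud mode also accepts -shards and -replicationFactor, so bin/solr create -c COLLECTION -shards 1 -replicationFactor 3 should request all three replicas in one step.

```shell
#!/bin/sh
# add-replicas.sh (hypothetical helper): add one replica of shard1 of a
# collection on each node given, via the Collections API ADDREPLICA action.
# Usage: ./add-replicas.sh COLLECTION NODE [NODE...]
#   e.g. ./add-replicas.sh portal server2:8983_solr server3:8983_solr

SOLR_URL="http://server1:8983/solr"   # assumption: server1 is up; any live node works

# addreplica_url COLLECTION NODE: print the ADDREPLICA request URL.
addreplica_url() {
  printf '%s/admin/collections?action=ADDREPLICA&collection=%s&shard=shard1&node=%s\n' \
    "$SOLR_URL" "$1" "$2"
}

if [ "$#" -ge 2 ]; then
  collection=$1
  shift
  for node in "$@"; do
    # The URL is quoted: an unquoted & would background the command.
    curl -sS "$(addreplica_url "$collection" "$node")"
  done
fi
```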

What makes it high availability?

Documents added through server1:8983 become searchable on server2:8983 almost immediately, once they are committed. If one server goes down, the others still hold the entire collection. When the server comes back up, its replica goes through a recovery stage before it accepts new documents again. Schema changes are also propagated to the other replicas almost immediately, because the configuration is stored in ZooKeeper.
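To see this replication in practice, index a throwaway document through one node and count it through another. A minimal sketch, assuming the portal collection from this guide and an id field in its schema (the default data-driven schema has one); ha-check.sh, the document id ha_check_1 and num_found are hypothetical. The update is sent with commit=true so the document is searchable straight away, and it is deleted again at the end.

```shell
#!/bin/sh
# ha-check.sh (hypothetical helper): index a test document via one node,
# count it via another, then delete it.
# Usage: ./ha-check.sh WRITE_HOST READ_HOST   e.g. ./ha-check.sh server1 server2

COLLECTION=portal
DOC_ID=ha_check_1

# num_found: read a SOLR JSON response on stdin, print response.numFound.
num_found() {
  tr -d '\n' | sed -n 's/.*"numFound":\([0-9]*\).*/\1/p'
}

if [ "$#" -eq 2 ]; then
  # Index through the first host and commit so the document is searchable.
  curl -sS -o /dev/null "http://$1:8983/solr/$COLLECTION/update?commit=true" \
    -H 'Content-Type: application/json' -d "[{\"id\":\"$DOC_ID\"}]"
  # Query through the second host; a numFound of 1 means the replica has it.
  found=$(curl -sS "http://$2:8983/solr/$COLLECTION/select?q=id:$DOC_ID&wt=json" | num_found)
  echo "numFound on $2: ${found:-no answer}"
  # Remove the test document again.
  curl -sS -o /dev/null "http://$1:8983/solr/$COLLECTION/update?commit=true" \
    -H 'Content-Type: application/json' -d "{\"delete\":{\"id\":\"$DOC_ID\"}}"
fi
```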

Troubleshooting

Zookeepers are not communicating correctly

Monitor the zookeeper.out file (written to the directory zkServer.sh was started from, /opt/zookeeper-3.4.6 if you followed the steps above) to see whether the ZooKeepers are communicating correctly. If they are not, the log names the server it is having trouble reaching. Make sure ports 2888 and 3888 are open on both ends.
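Besides the log, each ZooKeeper can be asked for its role. Running bin/zkServer.sh status on a server prints a Mode: line, and the srvr four-letter command on port 2181 returns the same information remotely (four-letter commands are enabled by default in ZooKeeper 3.4.6). A healthy 3-server ensemble has exactly one leader and two followers. A minimal sketch, assuming nc (netcat) is installed; zk-mode.sh and zk_mode are hypothetical.

```shell
#!/bin/sh
# zk-mode.sh (hypothetical helper): print the role of each ZooKeeper host.
# Usage: ./zk-mode.sh server1 server2 server3

# zk_mode: read `srvr` output on stdin and print the Mode value
# (leader, follower or standalone).
zk_mode() {
  sed -n 's/^Mode: //p'
}

for host in "$@"; do
  # -w 2: give up after 2 seconds if the host does not answer.
  mode=$(echo srvr | nc -w 2 "$host" 2181 | zk_mode)
  echo "$host: ${mode:-no answer}"
done
```

A server reporting standalone has not picked up the server.N lines in its zoo.cfg.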

SOLR instances can't talk to the zookeepers and/or can't talk to one another

Monitor solr.log (in /opt/solr-5.4.0/server/logs by default) for errors. Ensure ports 8983 and 2181 are open and reachable from the other servers.
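To check which SOLR instances ZooKeeper currently sees as live, the Collections API CLUSTERSTATUS action lists them under live_nodes. A minimal sketch, assuming curl is installed; live-nodes.sh and live_nodes are hypothetical, and the sed-based parsing is deliberately crude (a JSON tool such as jq would be sturdier). All three nodes (server1:8983_solr, server2:8983_solr and server3:8983_solr) should appear; a missing node points to that instance, or its connection to port 2181, as the one to investigate.

```shell
#!/bin/sh
# live-nodes.sh (hypothetical helper): list the SOLR nodes registered as live.
# Usage: ./live-nodes.sh server1

# live_nodes: read a CLUSTERSTATUS JSON response on stdin and print one
# node name per line from its live_nodes array.
live_nodes() {
  tr -d '\n' |
    sed -n 's/.*"live_nodes":\[\([^]]*\)].*/\1/p' |
    tr ',' '\n' |
    tr -d '" '
}

if [ "$#" -eq 1 ]; then
  curl -sS "http://$1:8983/solr/admin/collections?action=CLUSTERSTATUS&wt=json" | live_nodes
fi
```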